Your onboarding flow is where users decide whether to stay or leave. A broken signup button, confusing form field, or unresponsive verification step means they're gone. User onboarding testing catches these issues before they ship, but most tools create more problems than they solve. Traditional test frameworks use DOM selectors that break every time you update your UI, which means you're constantly rewriting tests instead of improving your product. We're looking at the tools that can actually handle complex onboarding sequences without falling apart when your interface changes.

TLDR:

- Vision-based testing adapts to UI changes without breaking, while DOM selector tools fail

- 75% of users abandon a product within a week if it's too hard to understand

- Docket catches UX friction (unresponsive buttons, confusing flows) that cause drop-off

- Tests written in plain English are up and running in days, versus months-long setup timelines for managed services

- Docket uses coordinate-based AI agents to test onboarding flows like a human would

What is End-to-End Testing for User Onboarding

End-to-end testing for user onboarding validates the complete journey from first interaction through activation: account creation, email verification, profile setup, and initial feature interaction.

97% of companies say good user onboarding is necessary for product growth, yet most teams rely on manual testing or incomplete automation that misses critical failure points. When onboarding breaks, users don't file bug reports. They leave.

Poor onboarding ranks as the third most important reason customers churn. 75% of users abandon a product within a week if it's too hard to understand. This narrow window makes testing critical.

E2E tests simulate real user behavior across your entire onboarding sequence. They catch broken signup forms, unresponsive buttons, confusing navigation, and failed API calls. Unlike unit tests that validate individual functions or integration tests that check component connections, E2E tests verify the actual experience users encounter.
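To make the "entire sequence" idea concrete, here is a minimal Python sketch of an onboarding flow expressed as ordered steps that share state. Everything in it is an assumption made for illustration: the `Step` type, the `run_flow` helper, and the stand-in step functions are hypothetical and replace real browser interactions. The point is that a break in one step often only shows up in a later one, which is what an end-to-end run is meant to surface.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    action: Callable[[dict], bool]  # returns True when the step succeeds


def run_flow(steps: List[Step], state: dict) -> Optional[str]:
    """Run steps in order; return the first failing step's name, or None."""
    for step in steps:
        if not step.action(state):
            return step.name
    return None


# Hypothetical stand-ins for real UI interactions (assumptions, not a real API).
def sign_up(state: dict) -> bool:
    state["account"] = {"email": state["email"], "verified": False}
    return True


def verify_email(state: dict) -> bool:
    account = state.get("account")
    if account is None:
        return False
    account["verified"] = True
    return True


def set_up_profile(state: dict) -> bool:
    # Profile setup is only reachable with a verified account.
    if not state.get("account", {}).get("verified"):
        return False
    state["profile"] = {"name": "Test User"}
    return True


def first_feature(state: dict) -> bool:
    return "profile" in state


ONBOARDING = [
    Step("signup", sign_up),
    Step("email verification", verify_email),
    Step("profile setup", set_up_profile),
    Step("first feature interaction", first_feature),
]

# A verification step that reports success but never marks the account verified.
BROKEN = [
    ONBOARDING[0],
    Step("email verification", lambda state: True),
    ONBOARDING[2],
    ONBOARDING[3],
]
```

Running `run_flow(ONBOARDING, {"email": "user@example.com"})` returns `None`, while the `BROKEN` variant fails at "profile setup" even though the verification step itself reported success. A check on the verification step in isolation would not have caught that.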

How We Ranked End-to-End Testing Tools for Onboarding

We evaluated tools based on criteria that matter for onboarding flows, not generic test automation features. Onboarding sequences involve multiple steps, form submissions, email verifications, and dynamic UI changes that break traditional test frameworks.

Our ranking considered:

- Test creation speed: How quickly can you build a complete onboarding test without writing code?

- Maintenance burden: Do tests break when UI changes, or do they adapt automatically?

- Multi-step flow handling: Can the tool navigate complex sequences like signup, verification, profile setup, and initial activation?

- UX issue detection: Does it catch confusing interfaces or unresponsive elements that would frustrate real users?

- CI/CD integration: Can tests run automatically on every deploy?

- Time-to-value: How long until you have reliable test coverage?

- Learning curve: Can non-engineers create and maintain tests?

Tools that require constant selector updates or fail on minor UI changes scored lower, regardless of their feature lists.

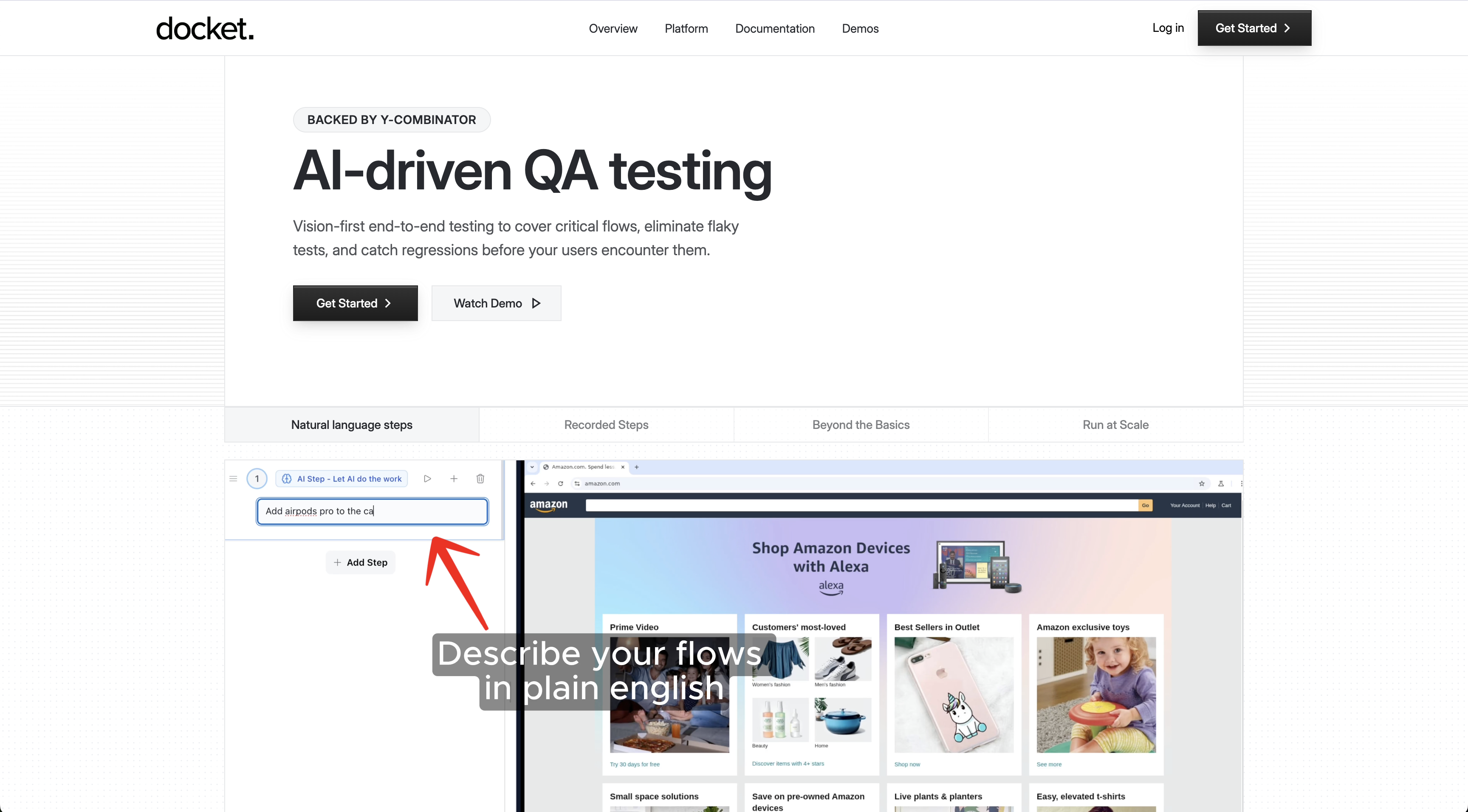

Best Overall End-to-End Testing Tool for Onboarding: Docket

Docket tests onboarding flows through coordinate-based interaction rather than DOM selectors. AI agents navigate your application by clicking buttons and filling forms based on what they see on screen, which removes the selector brittleness that breaks traditional tools when signup forms or profile setup screens change.

Tests are written in plain English. Describe your onboarding flow (signup, email verification, profile creation, first feature interaction) and the agents execute it without code. Product managers and designers can validate onboarding experiences directly.

The vision-based approach catches UX issues that technical checks miss:

- If a button is unresponsive, a form field is confusing, or a step would frustrate a real user, Docket flags it as a failure

- Tests verify whether users can actually succeed, not just whether elements exist in the DOM

- Tests self-heal as your onboarding evolves, so redesigned signup flows or adjusted profile setup steps don't require updating selectors or rewriting test scripts

Docket integrates with CI/CD pipelines and provides visual bug reports with screenshots, video recordings, and context for debugging.

QA Wolf

QA Wolf is a managed testing service that builds end-to-end test suites using Microsoft's Playwright.

What they offer

- 80% automated test coverage in 4 months

- Human QA engineers write and maintain tests

- Zero flake guarantee with human verification

- CI/CD integration and parallel test execution

Good for: Teams wanting full-service test creation without internal QA resources and who can wait months for complete coverage.

Limitation: QA Wolf relies on traditional DOM-based Playwright scripts that still require ongoing maintenance when onboarding flows change, despite their human support model. The 4-month timeline to reach 80% coverage means critical onboarding bugs could ship during the lengthy setup period.

Bottom line: A managed service that removes testing burden from internal teams but uses traditional automation approaches that don't solve test brittleness.

testRigor

testRigor is a no-code testing solution that lets users write tests in plain English.

What they offer

- Plain English test creation that converts natural language into executable tests

- Self-healing tests designed to adapt when UI elements change

- Cross-browser and mobile testing coverage

- AI-powered test generation from text descriptions

Good for: Non-technical teams that need to build tests without writing code or learning complex frameworks.

Limitation: testRigor still relies on DOM-based element identification under the hood. When onboarding flows change (new form fields, reordered steps, updated copy), the underlying selectors change too, and tests break despite the plain English interface. Complex user scenarios require manual scripting, and the tool doesn't analyze actual user experience problems like confusing navigation or unclear CTAs that cause onboarding drop-off.

Bottom line: Lowers the barrier to test creation but doesn't fix the core problem of maintaining reliable tests for fast-changing onboarding interfaces.

Virtuoso QA

Virtuoso QA offers scriptless test authoring with AI-assisted maintenance for web and mobile apps.

What they offer

- No-code test creation through a visual interface that lets non-technical team members build tests

- Self-healing capabilities that automatically adjust when UI elements change

- Natural language test writing for assertions and validation steps

- Cross-browser and cross-device execution to verify functionality across environments

Good for: Teams that want visual test authoring without writing code and need automated maintenance across browsers and devices.

Limitation: Virtuoso validates that elements exist and functions execute, but won't catch UX issues that drive onboarding abandonment. Tests still rely on element selectors that break during redesigns or A/B tests of signup variations.

Bottom line: Accessible test creation, but no visibility into the experience problems that cause users to drop off during signup.

Reflect

Reflect is a cloud-based testing tool built for browser-based test creation without local setup.

What they offer

- Record tests in the browser without installing frameworks or setting up test environments

- Schedule tests to run at set intervals

- Share and manage tests across teams

- Integrate with CI/CD pipelines for automated test runs on deployments

Good for: Small teams that want quick test setup without managing infrastructure or learning test frameworks.

Limitation: Reflect relies on element-based selectors that break when onboarding flows change. Redesigning your signup form, adjusting profile setup steps, or running A/B tests on your activation flow all require manual test updates. The tool checks functional correctness but doesn't surface UX friction points that cause users to drop off during onboarding.

Bottom line: Fast setup for basic functional testing, but lacks the resilience and UX analysis needed for complex onboarding flows.

Feature Comparison Table of End-to-End Testing Tools

Docket uses coordinate-based vision recognition instead of DOM selectors. When signup forms or profile screens change during redesigns or A/B tests, vision-based tests continue running without updates while selector-based tools break and require manual fixes.

Why Docket is the Best End-to-End Testing Tool for Onboarding

Onboarding tests need to evolve with your product. When you redesign signup forms, adjust activation flows, or A/B test profile creation steps, coordinate-based testing continues working while DOM selector tools require manual updates to every affected test.

Docket evaluates whether users can actually succeed, not just whether technical operations complete. If a button is unresponsive, a form field is confusing, or navigation would frustrate someone trying to activate their account, the test fails. This catches the experience problems that drive abandonment, not just broken API calls.

Vision-based testing removes the maintenance burden that makes traditional E2E tools unsustainable. Tests written in plain English continue validating your onboarding experience as your product evolves, without constant selector updates or framework expertise.

Final thoughts on automating user onboarding tests

User experience testing for onboarding needs to catch the problems that cause abandonment, not just verify that API calls succeed. Vision-based testing evaluates whether users can actually complete your signup and activation flows. When your onboarding evolves, your tests should adapt automatically instead of breaking and requiring manual fixes.

FAQ

How long does it take to set up end-to-end tests for an onboarding flow?

With vision-based tools, you can have working tests in hours by describing your flow in plain English. Traditional DOM-based tools require days or weeks to write selectors, handle edge cases, and debug flaky tests across different environments.

What's the difference between DOM-based and coordinate-based testing?

DOM-based testing relies on CSS selectors or element IDs that break when your UI changes. Coordinate-based testing relies on user intent: it uses visual recognition to interact with elements by their on-screen position and appearance, so tests continue working when you redesign forms or adjust layouts.
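As a rough illustration of the coordinate-based idea, the Python sketch below turns an intent ("create account") into a click position. It assumes some visual recognition step has already produced labeled bounding boxes from a screenshot. The `Detection` type, the `click_point` helper, and the sample layouts are all hypothetical, and this is not a description of how Docket or any specific tool is implemented.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Detection:
    """One on-screen element, as a hypothetical vision step might report it."""
    label: str   # visible text read from the screenshot
    left: int    # bounding box in screen pixels
    top: int
    width: int
    height: int


def click_point(detections: List[Detection], intent: str) -> Optional[Tuple[int, int]]:
    """Return the center of the element whose visible label matches the intent."""
    wanted = intent.strip().lower()
    for d in detections:
        if d.label.strip().lower() == wanted:
            return (d.left + d.width // 2, d.top + d.height // 2)
    return None  # nothing on screen matches what the user is trying to do


# The same form before and after a redesign: positions and order both change.
OLD_LAYOUT = [
    Detection("Email", 100, 200, 300, 40),
    Detection("Create account", 100, 260, 300, 48),
]
NEW_LAYOUT = [
    Detection("Create account", 520, 640, 200, 56),
    Detection("Email", 520, 560, 200, 40),
]
```

The test instruction stays the same across both layouts; only the computed coordinates differ, because the lookup depends on what is visible on screen and not on markup.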

When should I automate onboarding tests instead of testing manually?

Automate when you're shipping weekly or daily releases, running A/B tests on signup flows, or when manual testing can't keep pace with your deployment frequency. Manual testing works for early-stage products with infrequent changes, but becomes a bottleneck as velocity increases.

Can end-to-end testing catch UX problems that cause users to abandon onboarding?

Most tools only verify technical correctness (element exists, API returns 200). Vision-based testing evaluates whether a real user could succeed, flagging unresponsive buttons, confusing navigation, or unclear CTAs that drive abandonment even when the code works correctly.

Why do traditional E2E tests break when I update my signup form?

Traditional tests reference specific DOM selectors (CSS classes, IDs, XPath). When you change form layouts, add fields, or adjust styling, those selectors no longer match and tests fail. This requires manually updating every affected test after each UI change.
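The failure mode is easy to reproduce in a small Python sketch using only the standard library. The markup, IDs, and helper names below are invented for illustration. The example only contrasts a lookup tied to markup with one tied to what the user sees; it is not vision-based testing, and a visible-text lookup would itself fail if the button copy changed.

```python
from html.parser import HTMLParser


class ButtonFinder(HTMLParser):
    """Collects each <button>'s attributes and visible text."""

    def __init__(self):
        super().__init__()
        self.buttons = []
        self._current = None

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._current = {"attrs": dict(attrs), "text": ""}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "button" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.buttons.append(self._current)
            self._current = None


def find_buttons(html):
    parser = ButtonFinder()
    parser.feed(html)
    parser.close()
    return parser.buttons


def by_id(html, element_id):
    """Selector-style lookup: depends on an attribute users never see."""
    return [b for b in find_buttons(html) if b["attrs"].get("id") == element_id]


def by_text(html, text):
    """Lookup by the label a user would actually read."""
    return [b for b in find_buttons(html) if b["text"] == text]


# Hypothetical signup form before and after a restyle that renames id and classes.
BEFORE = '<form><button id="signup-btn" class="btn primary">Create account</button></form>'
AFTER = '<form><button id="cta-register" class="button button--lg">Create account</button></form>'
```

Here `by_id(BEFORE, "signup-btn")` finds the button, but `by_id(AFTER, "signup-btn")` returns an empty list, even though a user would see the same "Create account" button in both versions.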
