Everyone talks about AI in testing, but most tools are still using the same DOM selectors that have been breaking your tests for years. Intelligent QA agents should understand your application through visual context, not hardcoded element IDs. If you're shopping for a testing solution, you need to know which ones are genuinely autonomous and which ones will have you rewriting tests after every UI change.

TLDR:

- Vision-first AI testing agents use vision and coordinates to interact with web apps, not DOM selectors

- Vision-based tools survive UI refactors without breaking, unlike Selenium or Cypress

- Most tools added AI to existing DOM frameworks; only coordinate-based agents avoid selector maintenance

- Docket uses vision-first architecture to test web apps through coordinates and visual recognition

- Self-healing tests adapt to UI changes automatically, reducing post-deployment test fixes

What Are AI Testing Agents?

AI testing agents are autonomous programs that interact with web applications through a browser to validate functionality and catch bugs. They make decisions during test execution based on their understanding of the application state and user intent, rather than executing predefined scripts with hardcoded selectors.

How They Differ from Traditional Test Automation

Traditional tools like Selenium or Cypress rely on CSS selectors or XPath queries that break when developers change class names or DOM structure. AI testing agents use computer vision, coordinate-based interaction, or semantic understanding to identify elements the way humans do.

When a button moves or a workflow changes, an intelligent agent can still complete the test by recognizing the element's purpose and context rather than its exact location in the code. This autonomy means these tools can explore applications, generate test cases, or heal broken tests without constant human intervention.
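The contrast above can be illustrated with a small sketch. It uses only Python's standard `html.parser` and a text-label match as a stand-in for "recognizing purpose"; it is not computer vision and not how any particular agent works. The names `ButtonIndex`, `find_by_class`, and `find_by_label` are hypothetical, chosen for this example.

```python
from html.parser import HTMLParser


class ButtonIndex(HTMLParser):
    """Collects the class attribute and visible text of every <button>."""

    def __init__(self):
        super().__init__()
        self.buttons = []      # list of {"class": str, "text": str}
        self._current = None   # the button currently being parsed, if any

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._current = {"class": dict(attrs).get("class") or "", "text": ""}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "button" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.buttons.append(self._current)
            self._current = None


def index_buttons(html):
    parser = ButtonIndex()
    parser.feed(html)
    parser.close()
    return parser.buttons


def find_by_class(buttons, class_name):
    """Selector-style lookup: depends on the markup's class names."""
    return [b for b in buttons if class_name in b["class"].split()]


def find_by_label(buttons, label):
    """Purpose-style lookup: depends on what the user actually sees."""
    return [b for b in buttons if b["text"].lower() == label.lower()]


# Same button before and after a hypothetical frontend refactor.
before = index_buttons('<button class="login-button">Log in</button>')
after = index_buttons('<button class="btn btn-primary">Log in</button>')

print(len(find_by_class(before, "login-button")))  # selector matches before the refactor
print(len(find_by_class(after, "login-button")))   # selector finds nothing afterwards
print(len(find_by_label(after, "Log in")))         # visible label still matches
```

The class-based lookup fails as soon as the class is renamed, while the label-based lookup keeps working because nothing the user sees has changed.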

How We Ranked AI Testing Agents

We evaluated AI testing agents based on six criteria that matter to engineering teams shipping web applications:

- Architecture approach: Whether the agent uses vision and coordinates to interact with UI elements, or relies on DOM selectors that break when markup changes

- Autonomy level: How much the agent can decide on its own versus requiring explicit step-by-step instructions

- Self-healing capability: Whether tests automatically adapt to UI changes or require manual updates after every deployment

- Test authoring: If you can describe tests in natural language or must write code

- Maintenance overhead: Time spent fixing broken tests versus time spent writing new ones

- CI/CD compatibility: How easily the tool integrates into existing deployment pipelines

These factors directly impact how quickly you can build test coverage and how much effort it takes to maintain that coverage over time.

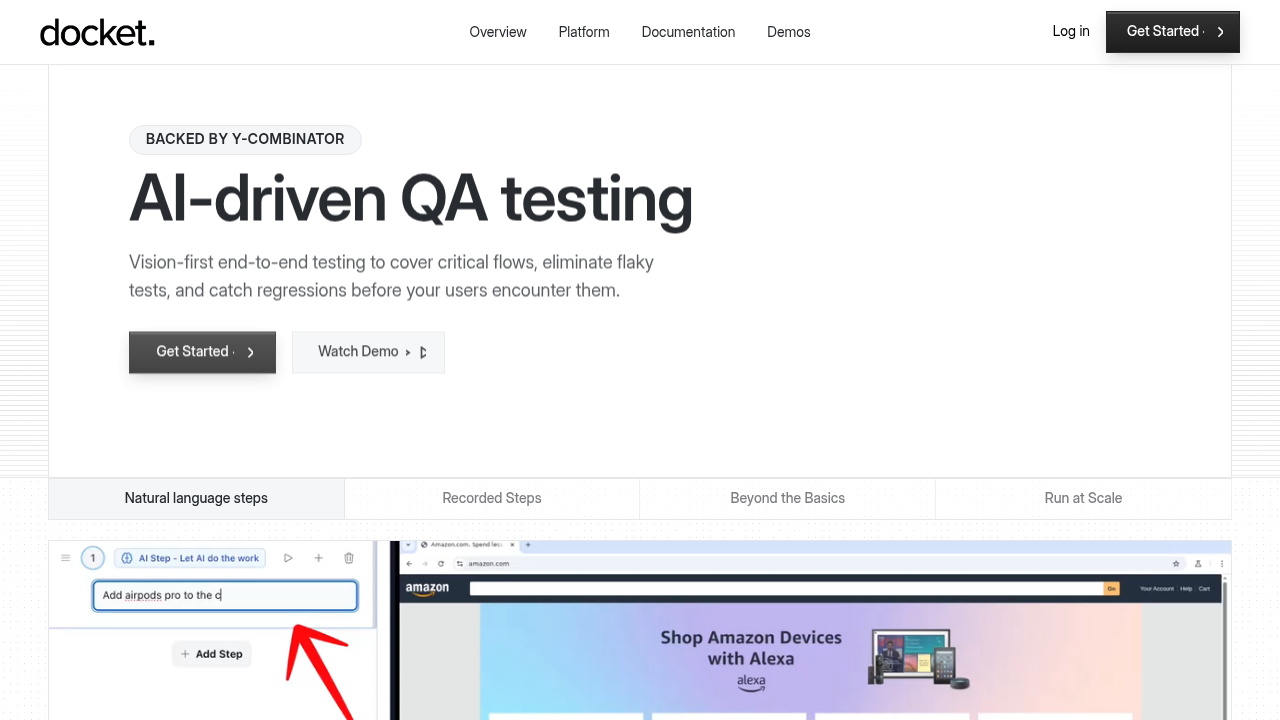

Best Overall AI Testing Agent: Docket

Docket interacts with web applications through coordinates and visual recognition rather than DOM selectors. Tests continue working when frontend teams refactor class names, restructure components, or modify element IDs.

You describe test scenarios in plain English. The AI agent executes tests by visually identifying elements and interacting with them through coordinate-based actions. When UI changes occur, the agent adapts without throwing selector errors. Test failures generate bug reports with screenshots, console logs, network traces, and reproduction steps.

The step recorder allows you to record exact (X,Y) coordinate clicks and save common flows like login or checkout. These flows can then be reused across multiple tests without requiring code.
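One way to picture reusable recorded flows is as named lists of coordinate clicks that several tests can share. The sketch below is a hypothetical data model written for illustration only; `Click`, `RecordedFlow`, and `build_test` are assumed names and the coordinates are made up. It does not reflect Docket's actual storage format or API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Click:
    x: int
    y: int
    note: str = ""   # optional human-readable description of the target


@dataclass
class RecordedFlow:
    name: str
    clicks: List[Click] = field(default_factory=list)

    def record(self, x, y, note=""):
        """Append one recorded click and return self so calls can be chained."""
        self.clicks.append(Click(x, y, note))
        return self


def build_test(*flows):
    """Concatenate saved flows into one ordered list of (flow name, click)."""
    return [(flow.name, click) for flow in flows for click in flow.clicks]


# Two saved flows (coordinates are illustrative).
login = RecordedFlow("login").record(640, 220, "email field").record(640, 340, "submit")
checkout = RecordedFlow("checkout").record(1100, 80, "cart icon")

# The same login flow is reused by two different tests.
purchase_test = build_test(login, checkout)
login_only_test = build_test(login)
```

Keeping flows as data rather than code is what lets them be shared across tests without anyone writing a script.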

The vision-first architecture handles dynamic UIs, canvas elements, and interactions that cause traditional selector-based tools to fail.

Mabl

Mabl provides AI-driven testing workflows and automated test generation from user stories. The tool handles cross-browser and mobile testing scenarios, with built-in integrations for CI/CD pipelines.

Tests run automatically and Mabl attempts to handle changes in your application. However, the underlying architecture depends on DOM elements rather than visual recognition. When your frontend team refactors components or modifies element attributes, tests still reference the old structure and break.

Mabl added AI features onto an existing DOM-based framework rather than building vision-first from the ground up. You'll spend more time maintaining tests after UI changes compared to coordinate-based alternatives.

Applitools

What They Offer

Applitools uses AI to catch visual regressions across browsers and devices. The visual AI distinguishes real UI changes from false positives caused by dynamic content like timestamps or animations.

It works with Selenium, Cypress, and Playwright, adding visual checkpoints to existing test suites. You can record flows or describe tests in natural language.
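The idea of separating real visual changes from noise caused by dynamic content can be sketched with a plain pixel diff that skips known dynamic regions. This is a generic masked-diff technique shown under simplifying assumptions (tiny grayscale images as nested lists, hand-specified ignore regions); it is not Applitools' algorithm, and `changed_pixels` is a hypothetical helper.

```python
def changed_pixels(baseline, current, ignore_regions=()):
    """Count differing pixels, skipping any pixel inside an ignore region.

    Images are lists of rows of grayscale ints. Regions are
    (left, top, right, bottom) tuples with right and bottom exclusive.
    """
    def ignored(x, y):
        return any(
            left <= x < right and top <= y < bottom
            for (left, top, right, bottom) in ignore_regions
        )

    count = 0
    for y, (row_a, row_b) in enumerate(zip(baseline, current)):
        for x, (pixel_a, pixel_b) in enumerate(zip(row_a, row_b)):
            if pixel_a != pixel_b and not ignored(x, y):
                count += 1
    return count


# A 4x3 "screenshot": the top-right two pixels stand in for a timestamp.
baseline = [
    [0, 0, 5, 5],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
current = [
    [0, 0, 7, 9],   # timestamp changed (dynamic content)
    [0, 0, 0, 0],
    [0, 1, 0, 0],   # one genuine change
]

timestamp_region = (2, 0, 4, 1)
print(changed_pixels(baseline, current))                      # 3
print(changed_pixels(baseline, current, [timestamp_region]))  # 1
```

Without the mask, the timestamp alone would flag the page as changed; with it, only the genuine difference is reported.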

Limitation

Applitools compares screenshots to detect visual bugs. It doesn't execute functional test flows or click through user journeys on its own. You'll need separate tools to handle the automation logic.

Bottom Line

Applitools answers "does this look right?" but won't replace your end-to-end testing setup. Teams use it alongside functional testing tools, not as a standalone solution.

Katalon

What They Offer

Katalon received recognition as a Visionary in the 2025 Gartner Magic Quadrant and serves enterprise customers across web, API, mobile, and desktop testing from a single interface. The tool includes built-in test case management and reporting, and its low-code interface reduces scripting compared to raw Selenium or Cypress.

Limitation

Katalon relies on element locators rather than visual recognition. Teams still handle technical setup and selector maintenance when UI changes occur.

Bottom Line

Katalon works for teams needing multi-channel test coverage but requires more technical expertise than autonomous AI agents.

testRigor

What They Offer

testRigor generates tests from plain English descriptions and records user actions through a Chrome extension. You write commands like "click on the login button" and the agent executes them across browsers. The tool integrates with CI/CD pipelines and supports cross-browser testing.
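To make the step-by-step nature of plain-English commands concrete, here is a minimal sketch of turning one sentence into one structured action. The tiny grammar below is an assumption made up for this example, not testRigor's actual syntax or parser; only the phrase "click on the login button" comes from the description above, and `Step` and `parse_step` are hypothetical names.

```python
import re
from typing import NamedTuple, Optional


class Step(NamedTuple):
    action: str
    target: str
    value: Optional[str] = None


# Each pattern maps one sentence shape to one action (illustrative grammar only).
_PATTERNS = [
    (re.compile(r'^click (?:on )?(?:the )?(?P<target>.+)$', re.I), "click"),
    (re.compile(r'^enter "(?P<value>[^"]*)" into (?:the )?(?P<target>.+)$', re.I), "type"),
]


def parse_step(text):
    """Translate a single English instruction into a Step, or raise ValueError."""
    text = text.strip()
    for pattern, action in _PATTERNS:
        match = pattern.match(text)
        if match:
            groups = match.groupdict()
            return Step(action, groups["target"], groups.get("value"))
    raise ValueError(f"unrecognized step: {text!r}")


print(parse_step("click on the login button"))
print(parse_step('enter "a@b.c" into the email field'))
```

Every sentence yields exactly one action, so the author still has to spell out each interaction in order.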

Limitation

testRigor interprets plain English commands rather than using vision to understand application state. You still specify each interaction step manually instead of describing an objective and letting the agent determine how to achieve it. When UI changes occur, you rewrite commands to match the new interface.

Bottom Line

testRigor reduces scripting but doesn't provide true autonomy. You're trading code for English instructions, not eliminating the need to map out each action.

Tricentis

What They Offer

Tricentis provides test automation with model-based testing and Vision AI for UI validation. The tool handles legacy applications and includes risk-based test optimization that prioritizes execution based on code changes. Application lifecycle management features support organizations with compliance and reporting requirements.
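Risk-based prioritization driven by code changes can be sketched as ranking tests by how much of the changed code they exercise. The overlap heuristic below is a deliberately simple assumption for illustration, not Tricentis's method; `prioritize`, the test names, and the file names are all hypothetical.

```python
def prioritize(tests, changed_files):
    """Order test names by how many changed files each test exercises.

    `tests` maps a test name to the set of source files it covers.
    Tests with equal overlap keep their original order (sorted() is stable).
    """
    changed = set(changed_files)
    return sorted(tests, key=lambda name: len(tests[name] & changed), reverse=True)


# Hypothetical coverage map and change set.
coverage = {
    "test_profile": {"profile.py"},
    "test_checkout": {"cart.py", "payment.py"},
    "test_login": {"auth.py"},
}
changed = ["payment.py", "cart.py", "auth.py"]

print(prioritize(coverage, changed))
# ['test_checkout', 'test_login', 'test_profile']
```

A real system would likely weigh more signals, such as failure history or business criticality, but the ordering principle is the same: run the tests most likely to be affected first.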

Limitation

Tricentis added AI capabilities to an existing DOM-based architecture. Tests still depend on element selectors rather than understanding the interface through coordinates and visual context.

Bottom Line

Tricentis delivers enterprise features but requires the same selector maintenance overhead as other DOM-based tools.

Feature Comparison Table of AI Testing Agents

Coordinate-based automation is the key differentiator. When tests interact through visual coordinates instead of DOM selectors, they survive frontend refactors without breaking.

Why Docket Is the Best AI Testing Agent

The test automation market is projected to reach $55.2 billion by 2028, yet most tools still rely on DOM selectors that break when the UI changes.

Docket uses vision-first architecture that interacts with applications through coordinates instead of selectors. When frontend teams refactor components or change class names, tests continue running because they understand visual context rather than code structure.

Other tools added AI features onto existing DOM-based frameworks. Docket's autonomous agents test by seeing the interface and understanding intent. You describe what should happen, and the agent determines how to achieve it.

This approach reduces time spent fixing broken selectors after deployments.

Final Thoughts on AI Testing Agents and Test Automation

Most intelligent QA tools added AI features onto existing DOM-based frameworks. The architecture matters more than the AI layer because selector-based tests break regardless of how smart the healing logic is. Vision-first testing solves the root problem by removing the dependency on markup structure. Your tests work as long as the UI looks the same, not as long as the code stays frozen.

FAQ

What's the difference between coordinate-based and DOM-based testing?

Coordinate-based testing interacts with UI elements through visual recognition and x-y coordinates, while DOM-based testing relies on CSS selectors or XPath queries. When your frontend team refactors code or changes class names, coordinate-based tests continue working because they identify elements by visual context rather than code structure.

How much time does it take to set up AI testing agents?

Most vision-based AI agents can start running tests within hours of setup, requiring only a URL to your web application. Traditional DOM-based tools typically need days or weeks for configuration, test script writing, and selector mapping before you see results.

Do AI testing agents replace manual QA entirely?

AI testing agents dramatically reduce the need for manual QA, but they don’t fully replace it. Platforms like Docket can automate regression tests and even perform exploratory-style testing by navigating the UI, uncovering flows, and detecting unexpected behavior. This eliminates much of the repetitive work that slows teams down.

But human QA is still crucial for product judgment: evaluating UX, interpreting ambiguous results, prioritizing issues, and understanding real user expectations. AI handles execution and exploration at scale, while humans guide decisions and context. Together, they produce faster, deeper, and more reliable software quality.

Why do tests break after UI changes with traditional tools?

Traditional tools reference specific DOM elements through selectors like .login-button or #checkout-form. When developers rename classes, restructure components, or modify IDs during refactoring, those selectors no longer match and tests fail even though the functionality works correctly.

Can AI testing agents handle dynamic or canvas-based interfaces?

Vision-based agents can test canvas elements, dynamically generated content, and complex UIs because they interact through visual recognition rather than DOM queries. DOM-based tools struggle with these interfaces since canvas elements don't expose traditional selectors for automation frameworks to target.
